When there are problems with the Boomi deployment that do not seem to be related to Boomi itself, please use the following contacts in order of escalation:

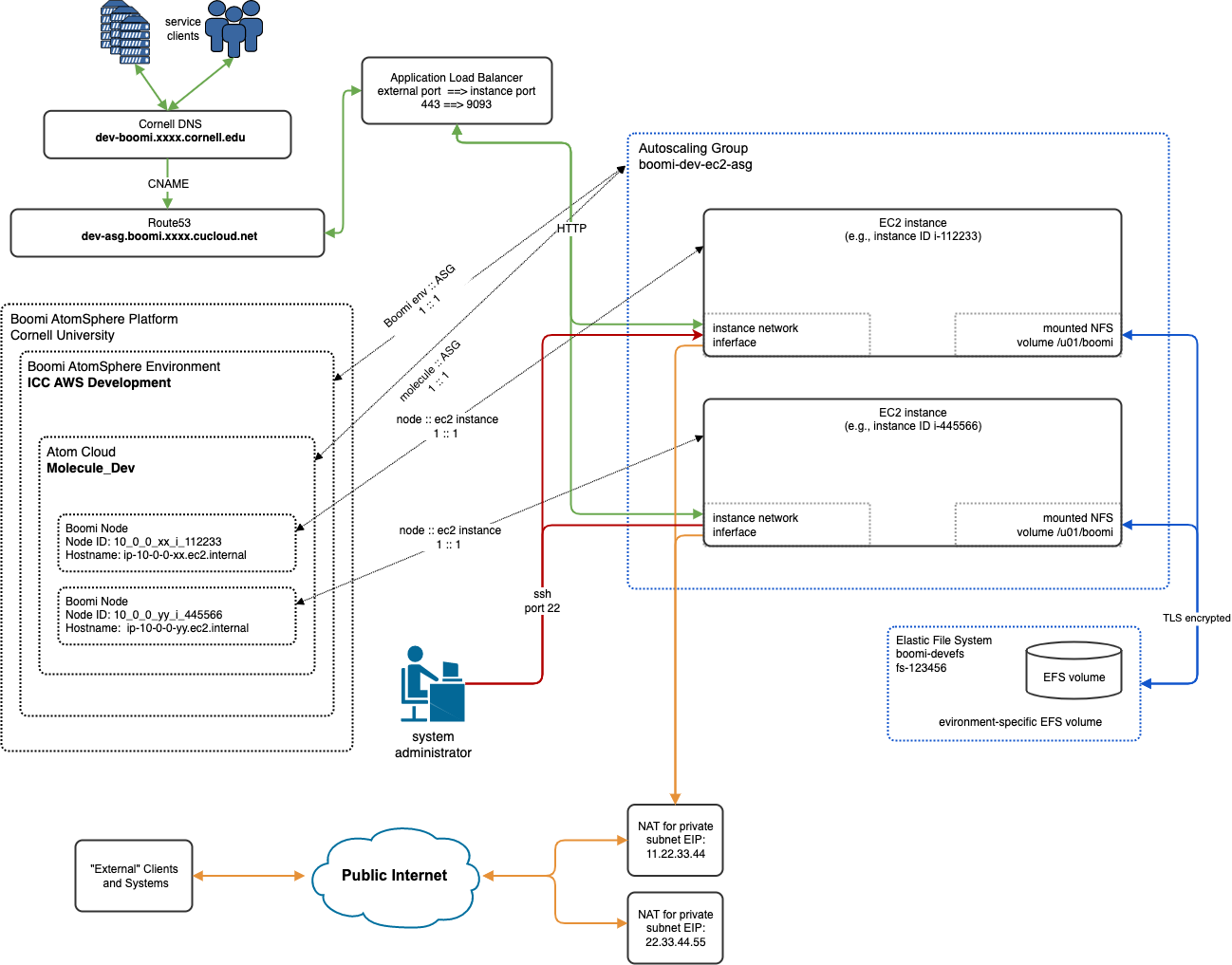

draw.io source: Boomi-ASG-Architecture.for-distribution.drawio

| Deployment | AWS Account | Environment Tag | VPC | VPC CIDR | cucloud.net Endpoint | cornell.edu Endpoint(s) | Endpoint Accessibility |

|---|---|---|---|---|---|---|---|

| dev | 123456789012 | dev | vpc-111111 xxxxxx-test-vpc | 10.0.0.0/22 | https://dev-asg.boomi.xxxx.cucloud.net | n/a | Cornell private network |

| test | 123456789012 | test | vpc-222222 xxxxxx-prod-vpc | 10.0.4.0/22 | https://test-asg.boomi.xxxx.cucloud.net | https://dev-boomi.xxxx.cornell.edu https://test-boomi.xxxx.cornell.edu | public internet |

| prod | 123456789012 | prod | vpc-222222 xxxxxx-prod-vpc | 10.0.4.0/22 | https://prod-asg.boomi.xxxx.cucloud.net | https://boomi.xxxx.cornell.edu | public internet |

| sandbox | 234567890123 | sandbox | vpc-333333 xxxxxx-sandbox-vpc | 10.0.8.0/22 | https://sandbox-asg.boomi.xxxx.cucloud.net | n/a | Cornell private network |

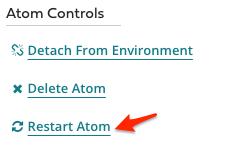

In the ASG-based Boomi environment, when the Restart Atom button is clicked in the Boomi web UI, each node restarts in a rolling fashion, similar to in the OpsWorks-based deployment.

The ASG-based environment does not have the same problem restarting nodes that occurred with the container-based nodes.

Additional detailed information about atom stop, start, and restart behavior is documented in Boomi Actions and Behaviors.

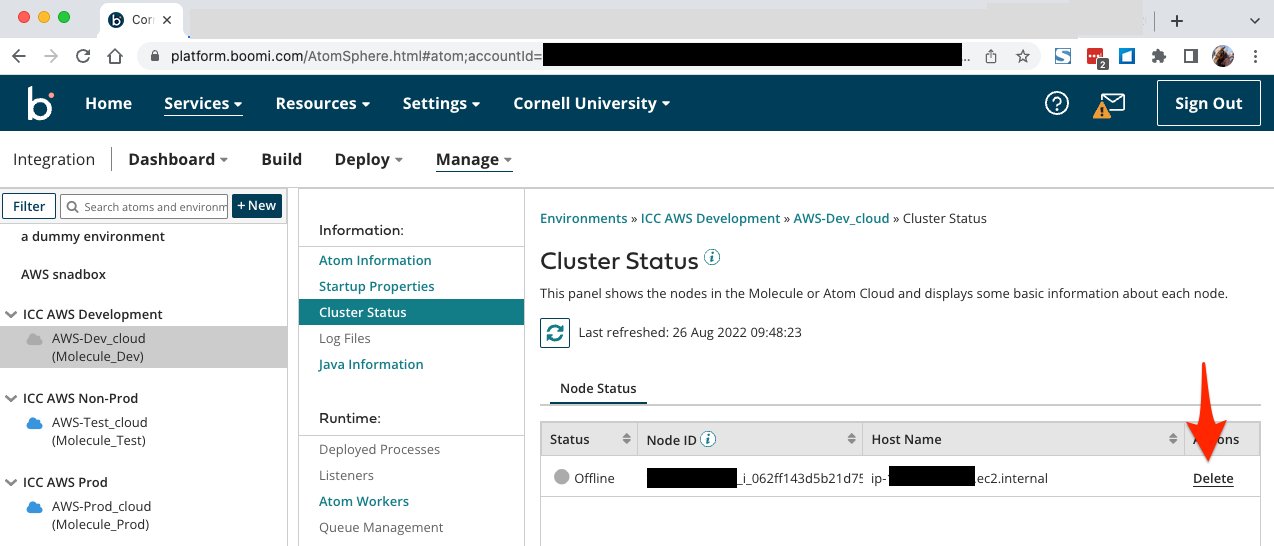

Boomi Node ID is derived from the AWS EC2 instance ID and IP address of the instance. The format of the Node ID is IPADDRESS_INSTANCEID, with "." and "-" replaced with "_".

E.g. The Node ID for the boomi node with IP address 10.0.0.xx running on EC2 instance i-062cdcdac0e5d4f29 is 10_0_0_xx_i_062cdcdac0e5d4f29.

Boomi Host Name is related to the private IPv4 address assigned EC2 instance for the node. E.g., Boomi node with Host Name ip-10.0.0.xx.ec2.internal has IP address 10.0.0.xx.

The Name of the EC2 instance reported in the EC2 console has form boomi-ENV-ec2 where ENV is one of dev, test, prod.

All EC2 instances for a given Boomi environment will be named the same. |

The data in this table reflect only the test and prod environments! The dev and sandbox environments use other VPCs and thus other CIDR blocks.

| Resource Type | Description | CIDRs | Notes |

|---|---|---|---|

| EC2 Instance | IPv4 CIDR of EC2 instances | 10.0.0.0/26 | |

| NAT Gateway | IPv4 CIDR of NAT Gateway used by Boomi EC2 instances | 11.22.33.44/32 22.33.44.55/32 | These public IPv4 addresses will be used when Boomi-nodes reach out to the public internet. |

| Load Balancers | The Load Balancers acting as the public front door to the Boomi web API interfaces. | 0.0.0.0/0 |

If Boomi clients need to whitelist these Load Balancers, they would have to do so using the domain name of the Load Balancers. I.e., test-boomi.xxxx.cornell.edu, boomi.xxxx.cornell.edu. |

Here is the rough timeline you can expect when new EC2 instances are launched by the ASG.

Total Elapsed Time minutes:seconds | What |

|---|---|

| 0:00 | CloudFormation stack update is submitted to increase the DesiredEC2InstanceCountParam parameter value. |

| 0:30 | ASG configuration is updated and the ASG launches a new EC2 instance |

| 5:30 |

|

| 5:40 | Boomi node on new EC2 instance is fulling running. |

| /home/boomi/local | working data local storage | This directory is local to the instance and is not accessible external to the instance. |

| /home/boomi | Boomi user directory | This directory is local to the instance is not accessible external to the instance. |

| /u01/boomi/ | root of shared molecule directory structure | This directory is mounted from a shared Elastic File System. At minimum all the instances that makeup the same Molecule will share this directory. However, other Molecules may as well, depending on how they are deployed. |

| /vat/tmp | Boomi/Java temporary directory | This is the Java temp directory, java.io.tmpdir. Configured in bin/atom.vmoptions. This directory is local to the instance and is not accessible external to the instance. |

These cron jobs are configured as part of the instance launch cloud-init process.

Whenever an instance running a molecule node is stopped or deleted, the Cluster Status page on GUI on the Boomi Platform will show a line for the defunct node with gray status (see screenshot below). It is always safe to use the pulldown action menu under the "gear" to "Remove from Table", thus cleaning up the cluster list.

There are a number of ways to restart a molecule – either a specific molecule node, or all the nodes in a molecule.

Level of escalation | What action is taken | What happens? | How to do it | Who can do it |

|---|---|---|---|---|

| 1 - minor/easy | restart all the atom processes on all molecule nodes | the atom service (Java process) on all the EC2 instances forming a molecule is stopped then started | Use Boomi Platform: "Atom Information" → "Restart Atom" | Boomi team members with access to the Boomi platform GUI |

| 2 | restart the atom service running on a particular node | the atom service (Java process) on the target EC2 instance is stopped then started | Login to the EC2 instance and sudo systemctl restart atom | Administrators, Cloud Team |

| 3 | reboot a particular EC2 instance | the atom service (Java process) is gracefully stopped (if possible), the instance is rebooted, the atom service is started | Use the AWS EC2 Console to find the target instance and reboot it. | Administrators, Cloud Team |

| 4 - major | deploy a replacement EC2 instance; terminate the problem instance EC2 instance | the new EC2 instance joins the molecule as a new node; the problem instance leaves the molecule cluster | See procedures in Managing Auto Scaling Groups section below. | Administrators, Cloud Team |

Boomi Molecules in AWS use a shared AWS Elastic File System (EFS), one EFS for production, and one EFS for dev/test. Integration team members can access these using SFTP, either directly from the command line or with the aid of a SSH/SFTP client.

See SFTP and Cloud Object Store Clients - OS X and Windows for a list of SFTP clients compatible with this SFTP system. Some of these clients allow systems to be integrated into native Windows or OS X file explorer interfaces giving a user experience similar to mounting a remote drive.

| SFTP endpoint: | sftp.xxxx.cucloud.net (port 22) |

| Allowed users: | Any user that is part of the CIT-boomi-adm AD group. This AD group is managed by the DBA/Integrations Team |

| Credentials: | standard Cornell netid and password (Two-Step Login required) |

SFTP EFS Directory Structure | Test Molecule Host EFS Directory Structure | Prod Molecule Host EFS Directory Structure |

|---|---|---|

| /cu-odaa/molecule_test | /u01/boomi | n/a |

| /cu-odaa/molecule_test/security | /u01/boomi/security | n/a |

| /cu-odaa/molecule_test/Cloud_Molecule_Test | /var/boomi/Cloud_Molecule_Test | n/a |

| /cu-odaa/molecule_prod | n/a | /u01/boomi |

| /cu-odaa/molecule_prod/security | n/a | /u01/boomi/security |

| /cu-odaa/molecule_prod/Cloud_Molecule_Prod | n/a | /var/boomi/Cloud_Molecule_Prod |

Containers running Boomi Molecules use the "boomi" user (uid = 1000) and the "boomi" group (gid = 1000). Molecule processes are configured to use umask 002 for files and directories they create. As such, default owner:group is boomi:boomi and the group will have the same privileges to as the boomi user.

SFTP users accessing files and directories have their user id assigned from Cornell AD, but are forced into group with id 1000 as their primary group when they connect to an SFTP system. This configuration, in addition to the umask and group id used by Boomi containers, should allow fairly SFTP users unhindered access to read and write files in the filesystem used by Boomi molecules. In addition, SFTP users have umask set to 002 as well, so that any group-level permissions set on files they upload should remain intact. Note that this configuration does NOT set read and write privileges for the group on a file uploaded if the file had only read privileges for the group on the source system. Some SFTP clients have features that allow privileges to be applied to files uploaded from the client system. Files uploaded to the EFS via SFTP will have group id set to 1000 since that is the primary group for SFTP users.

This section describes features available to the Boomi Team in the AWS Console. The team will login to the AWS Console using the shib-integrations role. (These same features are available to AWS Console users with the shib-admin role.)

Via shib-integrations, the Boomi team will have read-only access to the following features:

The direct URL to login is https://signin.aws.cucloud.net/.

Several key logs from ASG EC2 instances are captured to CloudWatch logs. They can be found in separate Streams within CloudWatch Log Groups named /boomi/asg/ENV.

CloudWatch Log Groups:

| Instance File | CloudWatch Log Stream |

|---|---|

| /u01/boomi/Cloud_MOLECULE/logs/*.container.ATOM_LOCALHOSTID.log | /INSTANCE_ID/IP_ADDRESS/boomi-container.log |

| /u01/boomi/Cloud_MOLECULE/logs/*.shared_http_server.ATOM_LOCALHOSTID.log | /INSTANCE_ID/IP_ADDRESS/boomi-shared-http-server.log |

| /var/log/syslog | /INSTANCE_ID/IP_ADDRESS/var/log/syslog |

| /opt/aws/amazon-cloudwatch-agent/logs/amazon-cloudwatch-agent.log | /INSTANCE_ID/IP_ADDRESS/cloudwatch-agent.log |

| /var/log/cloud-init-output.log | /INSTANCE_ID/IP_ADDRESS/var/log/cloud-init-output.log |

| /var/log/cloud-init.log | /INSTANCE_ID/IP_ADDRESS/var/log/cloud-init.log |

filter @logStream like '/i-0dc6faa3f8e3afaf5/10.0.0.186/' | filter @message like /(?i)Exception/ or @message like /(?i)Warn/ or @message like /(?i)Error/ or @message like /(?i)Severe/ | fields @timestamp, @message | sort @timestamp desc |

See complete CloudWatch Logs Insights query syntax in AWS documentation.

To connect to an instance using SSH:

ssh -i ~/.ssh/oracle.pem boomi@xx.xx.xx.xx |

All Boomi EC2 instances have Session Manager enabled. This feature uses the SSM agent running on the instances to provide shell access to the system. The user experience is similar to SSH access, but can be initiated from the AWS web console.

Session Manager sessions log you into the instance using the linux user named ssm-user. That user has sudo privileges so you can perform command using the root or boomi linux user.

The main Boomi process on EC2 instances is the atom linux service.

If you have an SSH/SSM terminal session into a Boomi EC2 instance you can use the following commands to manage the atom service.

The following phrases are used interchangeably in the text below:

The names for the CloudFormation stacks involved below still include ecs in the name even though, eventually, ECS will not be involved. It would be unnecessarily disruptive to destroy the old CloudFormation stacks and create new ones with more sensical names. |

| Setting to be Changed | What Controls This | Process to Follow |

|---|---|---|

| Root EBS Volume Size | RootEBSVolumeSizeOverrideParam parameter | (No template changes required.)

|

| EC2 Instance Type | InstanceTypeParam mapping |

|

| Number of Instances Running | DesiredEC2InstanceCountParam parameter | See the Managing Auto Scaling Groups section below. |

When managing EC2 instances that are part of an ASG, Scale-In Protection is sometimes useful to use. Scale-in protection is a per-instance setting that can be used to prevent an ASG from terminating an specific instance that you wish to remain untouched. In the Boomi ASG deployment, scale-in protection is initially off (or disabled) for EC2 instances.

A use case where scale-in protection may be useful is when reducing the total size of the ASG. If you wish to make sure that the Boomi cluster leader or a node that is running a long process isn't the instance chosen by the ASG to be terminate, you can set scale-in protection on the node(s) to be preserved. The ASG will choose other node(s) to terminate to reach the target ASG size.

If you set scale-in protection to protect instances from termination, be sure to remove the scale-in protect after the operation (i.e., ASG size reduction) is complete.

This is controlled by the DesiredEC2InstanceCountParam parameter in the relevant boomi-ENV-ecs CloudFormation stack.

The newly launched EC2 instance will automatically join the relevant Boomi environment/cluster.

If you wish to prevent the ASG from terminating particular instances, you can set Scale-in Protection on those instances prior to changing the ASG size. But, be sure that enough instances have Scale-in Protection disabled so that the ASG can reach the new target ASG size. E.g., if the current ASG size is 3 and the target ASG size is 1, then at most one instance can have Scale-in Protection set; otherwise, the ASG has no way to reach the target size.

If you set Scale-in Protection for any instances, it is wise to remove Scale-in Protection once the resizing process is complete.

If the ASG terminates the Boomi node that is currently the head/leader of the cluster, the remaining nodes will determine a new head/leader

ASGs can be configured to scale up or down automatically, based on metrics or based on time. E.g., the dev environment will have (an) instance(s) running on weekdays during normal work hours.

Additional automatic ASG scaling can be configured by the Cloud Infrastructure & Solutions Engineering Team (i.e., the CIT Cloud Team).

This process necessarily also retires the Boomi node running on the target EC2 instance.

In the Terminate instance? dialog, review the instance ID and name of the target instance. If correct, click on Terminate.

The newly launched EC2 instance will automatically join the relevant Boomi environment/cluster.

This process necessarily also retires the Boomi node running on all the replaced EC2 instances.

Using the default setting of 90% for Minimum healthy percentage and 30 seconds for Instance warmup leads to the ASG first terminating one (random) instance at a time and restarting its replacement before terminating the next instance. In once live test, we saw ~3 minutes in between the launching of new EC2 instances.

The prod and test EFS volumes are manually configured to be backed up twice daily.

There are two options for the scope of files to be restored from a backup:

There are also two options for the location of the restoration:

See AWS documentation for Restoring an Amazon EFS file system.

If at all possible, restore files to the original file system and copy/move files as needed.

If you decide to restore to a new EFS file system, there are several places in the CloudFormation-based deployment that will need to be updated with the ID of the new file system. At a minimum, restoring to a new EFS file system will require that new EC2 instances be launched for the environment where that has happened. In this situation, you probably will need to engage the CIT Cloud Team for assistance.

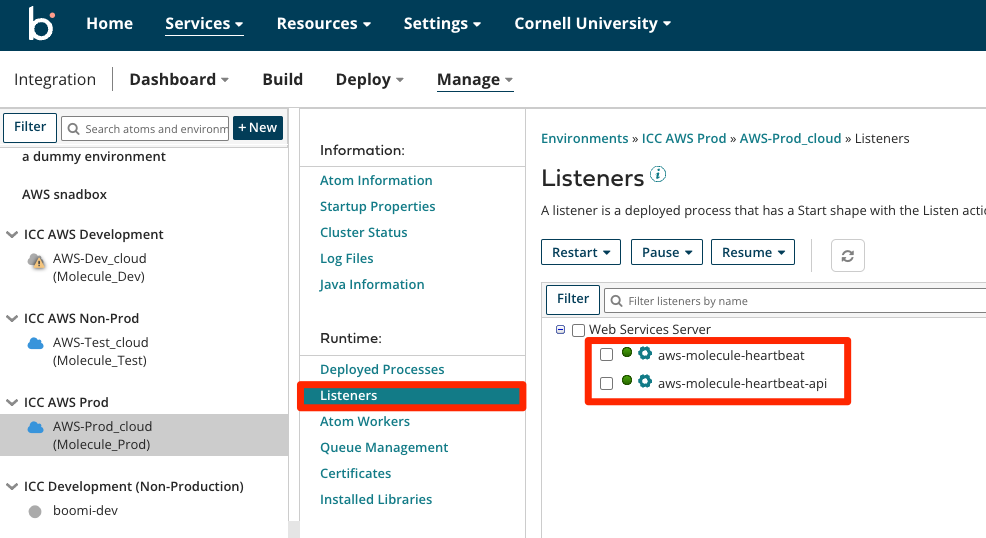

A simple custom Boomi heartbeat web service that can be deployed to specific environments in order to test proper load balancer, certificate, and port configurations. That heartbeat web service uses a simple process (aws-molecule-heartbeat) and simple API (aws-molecule-heartbeat-api). This process and API is little more than a REST version of "Hello World".

Deploy this API and process as needed for testing. It is not required to be deployed to an environment for normal functioning of the AWS ASG deployment.

| URL | |

|---|---|

| General Pattern | https://ALB-CNAME.boomi.xxxx.cucloud.net/ws/rest/aws-molecule-heartbeat/heartbeat |

| Production | https://prod-asg.boomi.xxxx.cucloud.net/ws/rest/aws-molecule-heartbeat/heartbeat |

curl --location \

--request GET 'https://prod-asg.boomi.xxxx.cucloud.net/ws/rest/aws-molecule-heartbeat/heartbeat' \

--header 'Authorization: Basic TOKEN_IN_BASE64' |