There are two parts to this assignment: the mandatory part, due Tuesday May 5th at 11:59pm on CMS, and the optional bonus part, due as a separate "assignment" on CMS Wednesday May 6th, 11:59pm. Submit what you have at least once by an hour before that deadline, even if you haven't quite added all the finishing touches. CMS allows resubmissions up to, but not after, the deadline. If there is an emergency such that you need an extension, contact the professors.

You may work in groups of one up to four, for the same reasons as have been explained in previous assignments. Your groups for the bonus part may be different than your groups for the mandatory part, so you will need to form (potentially different) groups for both the two "assignments" on CMS, using the handshake protocol described in previous assignments. (Do not forget to accept group invitations.) Please ensure that each member of the group can individually defend or explain your groups submission equally well.

Students may discuss and exchange ideas with students not in their group, but only at the conceptual level. As discussed in previous assignments, we distinguish between “merely” violating the rules and violating academic integrity. The way to avoid violating academic integrity is to always document any portions of work you submit that are due to or influenced by other sources, even if those sources werent permitted by the rules.

1 EM Primer

The EM algorithm can be used whenever we have a maximum likelihood estimation problem with hidden variables. The idea is as follows: Imagine we have a graphical model parameterized by θ ∈ Θ. (Generally θ will be a vector.) Say O represents the set of observed variables and H the set of hidden variables. The goal of MLE is to find the parameter that best explains the observation. Specifically, we would like to obtain:

θ∗ = argmaxθ∈Θ log(Pθ(O))

However, in many cases, even if we are given θ, taking derivatives of or simply even writing down Pθ(O) is hard, but if the set of hidden variables H were only revealed, calculating (derivatives of) Pθ(O,H) is simple. The idea in the EM algorithm is to start with an initial guess of the best parameter θ0 , and based on this iteratively refine the parameter we obtain, as follows: At iteration i, based on the old parameter θi−1 we calculate a distribution over the hidden variables given the observed variables O by setting

Qi(H) = P(H|O,θi−1)

The above is called the E-step. Next, given this distribution over the hidden variables we find the subsequent θi as follows:

θi = argmaxθ∈Θ ∑H Qi(H) log(Pθ(O, H))

The above is the M-step. We iteratively repeat these two steps till convergence (or till we are bored). Now notice that the E-step is simply doing inference in a graphical model. For the M-step, the term inside the log is now a joint probability that factorizes over our graphical model; this should make the optimization in the M-step easy (or at least easier).

2 The apple-doesn’t-fall-far-from-the-tree (ADFFFTT) model

In this question, we explore the possibility of making our own machine learning algorithm/model based on a generative story. We will specifically use a modified version of the apple-doesn’t-fall-far-from-the-tree generative story we used as one of the examples in class for clustering.

Here is the generative story: in our orchard there are two types of apple trees, the green-apple trees and the red-apple trees. Starting from day one, each day a new tree sprouts as follows. The parameters of our model are: a mixture distribution π over the K = 2 types of trees, a single variance parameter σ2 > 0 and locations μ1 , μ2 ∈ R2 of the first tree locations for each of the tree types. The way trees are generated by nature is given by the following procedure:

- On each round t, nature picks ct ∈ {1, 2} by drawing independently from the mixture distribution π. Red apples are picked with probability π(1), so green apples are picked with probability π(2) = 1 − π(1).

- Next, given the type of apple tree ct , the location of this new tree xt is distributed according to the normal distribution with covariance σ2I and mean given the location of the previous apple tree of type ct . That is, if ct was the red-apple type, then the location of this new red-apple tree is close to the location of the most recently sprouted red-apple tree, or specifically, normally distributed with a spherical covariance centered at the most recently sprouted red-apple tree.

- We need to specify the deal for the first red-apple tree (RAT) and the first green-apple tree (GAT), because neither of them have previous tree of the same type, by definition. So: the location of the first RAT (on whichever day it first occured) is distributed according to the normal distribution with mean μ1 and covariance σ2I, and similarly the location of the first GAT is distributed according to the normal distribution with mean μ2 and covariance σ2I.

After N days you visit the orchard for the first time and only notice the sprouted trees. Lucky for you, the trees have been tagged with their sprout order, but you can't distinguish the RATs from the GATs. Can you guess which sprouted trees are of the same types?

3 Questions

Q1 (Graphical model).

- Write down the parameters of the model we just specified.

- We want to write down a graphical model (Bayesian Network) that explains the ADFFFTT generative process. A direct approach would be to have observed variables X1,...,XN for the locations of sprouted trees and hidden variables C1, . . . , CN ∈ {1, 2} that specify the kind of each tree. You don’t need to write down this graphical model, but you do need to tell us in your writeup, explaining how you arrived at your answer: how many edges would such a model have?

We don’t need the exact number; big-O notation in terms of variable N will suffice.A better phrasing: you may ignore constants in your answer. - Now let’s try to simplify the graphical model by introducing some more carefully-chosen hidden variables. Specifically, let us introduce the superscripted and subscripted variables X11, . . . , X1N and X21, . . . , X2N where variable Xct indicates the location at time t (including the tree sprouted on day t) of the most recent tree of type c ∈ {1, 2}. Specifically,

- Given Ct =1, X1t ∼ N(X1t-1 ; σ2I), X2t = X2t-1 and Xt=X1t

- Given Ct =2, X2t ∼ N(X2t-1; σ2I), X1t = X1t-1 and Xt=X2t

Draw the graphical model for this formulation, and write down how many edges it contains, explaining how you derived this.

- Give the conditional probability of each of the variables in the graphical model given its parents.

- We would like to perform inference on this graphical model given the parameters, specifically we want to compute for every t the probability[footnote 1] pθ(X1t, X2t , Ct | X1,...,XN). Using either variable elimination or message-passing, write down how you would compute pθ(X1t, X2t , Ct | X1,...,XN) given the observations and some parameter (actually, a vector of parameters) θ . We are not looking for the exact form/calculations, or in other words, your answers can be computations in terms of pθ (Variables|Parents) and need not involve the actual form of these distributions and the actual integrals or sums and the Gaussian distributions (like we did for HMM’s in lecture). So, if you choose to do variable elimination, we would like to see what the m?(...) would be and what order of elimination you chose; for message passing, we would like to see equations giving the αs and βs as sums/integrals of products of stuff, but don't actually solve the actual integrals or sums.

(footnote 1: We are using little-p here to indicate that this “probability” could be a density function. But for you, feel free to use integrals, summations, whichever — we aren’t asking you to be particular about when dealing with a discrete vs. continuous distribution.)

Q2 (EM Algorithm).

Since in the previous question we asked you to give us the exact forms of the conditional probabilities for each node, for this question, you can just write these probabilities as Pθ(some variable|some other variable) (i.e., don’t expand them any further: so you’re only talking about the graphical structure, not the specific distributions.) We would of course like to find/learn the parameters, based on the observations, via EM. We’ll take the unconventional approach of starting with the M-step, for reasons explained later.

For the M-step what we would like to do is find, given the Q distribution,

θi = argmax θ∈Θ ∑ H Qi(H) log(Pθ(O, H)) (equation 1)

- Write down the set of hidden variables H and the set of observed variables O for this problem/graphical model.

- We want to simplify log(Pθ(O,H)) in Equation (1) before we attempt to perform the M-step. The nice thing about graphical models is that the joint distribution factors according to the graph. Use this fact and simplify log(Pθ(O,H)) to be of the form:

log(Pθ(O, H)) = ∑Nt=1 Some termst

What are the terms in the above summation? Give them in your writeup. Again we don’t care about the parameterization, so write your answers in terms of Pθ (Variables|Parents) rather than expanding such terms out further. - Using the above and swapping summations as in lecture, we can write

∑H Q i(H) log(Pθ(O, H)) = ∑Nt=1 ∑H Qi(H) Some termst

In the above, for every t, note that Some termst only involves a (small) subset of the terms of the graphical model. Hence, by marginalizing over terms not appearing in Some termst , we can write, for each t,

∑H Qi(H) Some termst = ∑Ht Qi(Ht ) Some termst

(In the above, the “Some terms” on the left- and right-hand sides of the equation are indeed the same.)

Write down what these variable sets Ht’s are. Again, we don’t require you to expand out Pθ(Variables|Parents) terms.

The point of this question: The reason we did all this, including “starting (our analysis) with the M-step” is: based on the above simplification for the M-step, we see that in the E-step we don’t need the entire Qi(H), but only terms Qi(Ht)’s. Aren’t you proud?

Following are bonus questions; solving them will provide you with “karma” points that we will factor into our final letter-grade determinations. You do thus do not need to attempt them. If you choose to submit solutions, do so on the separate CMS assignment for the bonus questions, and make sure both your bonus and your mandatory solutions are both stand-alone (that is, can be graded independently of each other).

Also, remember that you need to regroup (and are allowed to form different groups) for the bonus separate CMS assignment. We will not automatically transfer groups over.

Q3 (Bonus Question).

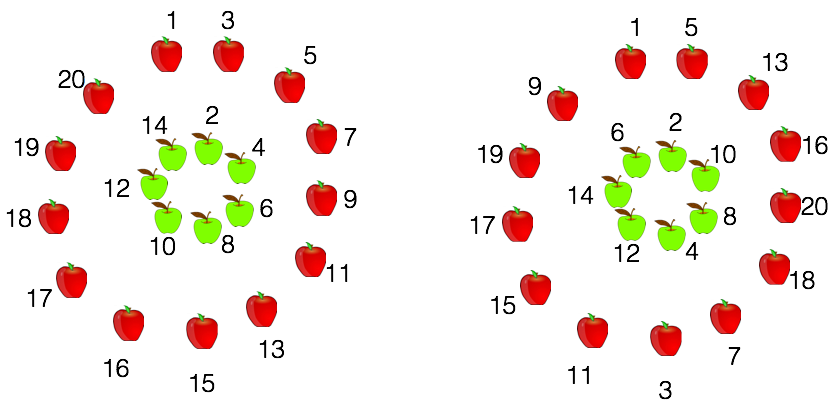

Say we are given two sets of observations of 20 points each. The two sets are depicted in the figure below, with the following hidden information revealed: which trees are RATs and which are GATS.

Both sets of data points have trees in the exact same locations. However, the order in which they occur in the left side figure is different from the ones on the right. Now if we were to run the developed EM algorithm for data set one and data set two, we would get very different answers. This is unlike k-means or single-link clustering algorithms where for both data sets we get exactly the same clustering. Why is this, and what would be the likely cluster assignment for the data in the left and in the right? Explain your answer

Q4 (Bonus Question [for the slightly brave ones!]).

- Write down and derive the full inference algorithm/pseudocode for the E-step.

- Write down the full derivation and update form for the M-step.

- Implement the derived EM algorithm and submit your code as a zip file(please include comments indicating the main steps).

Here is a link to a page where you can view "diffs" between any two versions: use the "compare selected versions" feature to highlight precisely what text was added or deleted.