Lillian Lee, Choice 2019 Symposium "Wisdom from Words: Insight from Language and Text Analysis", draft/work in progress

This URL: https://confluence.cornell.edu/display/~ljl2/Choice2019

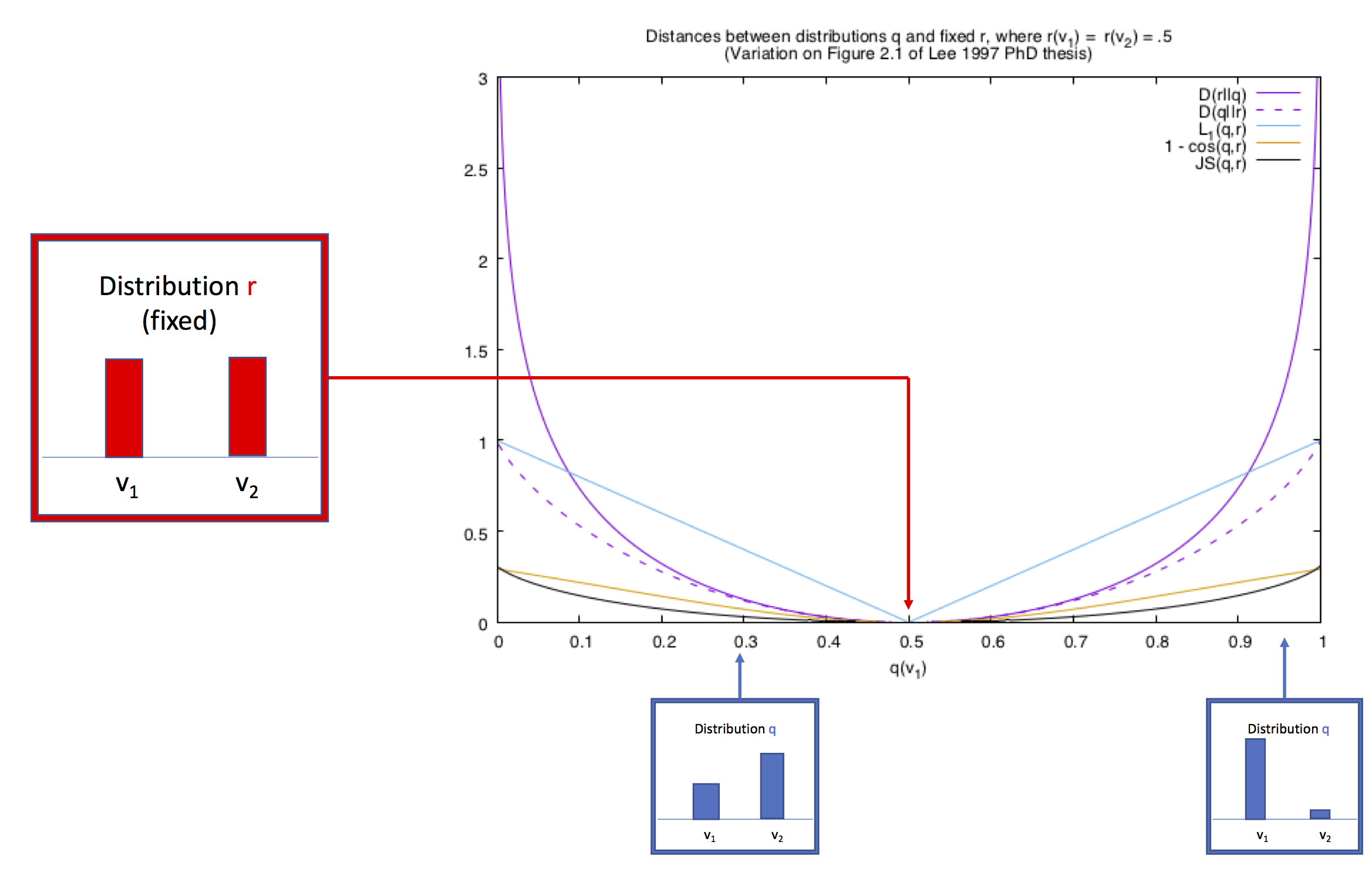

Setting: what makes language type A different from type B?

Examples my co-authors and I have worked on:

For various reasons, including an eye towards deploying applications, we ultimately evaluate our hypothesis with prediction, even though we are personally interested and invested in understanding what underlies the phenomenon being considered.


Some ... features/technologies I like


The Cornell Conversational Analysis Toolkit

Features for: linguistic coordination, politeness strategies, conversation motifs, conversation graphs.

Datasets: Wikipedia talk page conversations that (do not) become derailed by personal attacks; dialogs from movie scripts; UK Parliamentary question-answer pairs; Supreme Court oral arguments; Wikipedia talk page conversations; post-tennis-match press interviews; Reddit conversations.

Chenhao Tan's list of hedging phrases, such as "I suspect" and "raising the possibility"

This is in the long line of LIWC-like lexicons. [README] [list itself]
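To illustrate how a LIWC-like lexicon typically becomes a feature, here is a minimal sketch that counts hedging-phrase matches per 100 tokens. Only the two phrases quoted above are used; the helper name `hedge_rate`, the tokenization, and the normalization are assumptions made for illustration and are not taken from the released list or its README.

```python
import re

# Illustrative two-phrase lexicon taken from the examples in the text;
# the real list is longer and is not reproduced here.
HEDGES = ["i suspect", "raising the possibility"]


def hedge_rate(text, lexicon=HEDGES):
    """Return hedging-phrase matches per 100 word tokens (hypothetical helper)."""
    # Crude tokenization (an assumption): lowercase runs of letters/apostrophes.
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0
    normalized = " ".join(tokens)
    # Count whole-phrase matches for every lexicon entry.
    hits = sum(
        len(re.findall(r"\b" + re.escape(phrase) + r"\b", normalized))
        for phrase in lexicon
    )
    return 100.0 * hits / len(tokens)


if __name__ == "__main__":
    print(hedge_rate("I suspect the result holds, raising the possibility of reuse."))
```

A rate like this is one number per document, which is what makes lexicons convenient as features, although it ignores context (negation, quotation) entirely.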

Language models, which assign probabilities P(x) to words, sentences or text units after being trained on some language sample.

These are great for similarity, distinctiveness, visualization.
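As a rough sketch of the idea, the snippet below trains a deliberately simple add-one smoothed unigram model on a language sample and scores new text by cross-entropy; comparing the scores under two models is one way to quantify how distinctive a text is of type A versus type B. The class name `UnigramLM` and the toy sentences are assumptions for illustration; real work would use a much stronger model.

```python
import math
from collections import Counter


class UnigramLM:
    """Add-one smoothed unigram language model (a deliberately simple stand-in)."""

    def __init__(self, tokens):
        self.counts = Counter(tokens)
        self.total = len(tokens)
        # One extra vocabulary slot: all unseen words share a single "unknown" type.
        self.vocab_size = len(self.counts) + 1

    def prob(self, word):
        """P(word) with add-one (Laplace) smoothing."""
        return (self.counts[word] + 1) / (self.total + self.vocab_size)

    def cross_entropy(self, tokens):
        """Average negative log2 probability per token; lower means more typical."""
        if not tokens:
            raise ValueError("need at least one token to score")
        return -sum(math.log2(self.prob(w)) for w in tokens) / len(tokens)


if __name__ == "__main__":
    # Toy "type A" and "type B" samples (made up for this example).
    type_a = UnigramLM("we might possibly see an effect".split())
    type_b = UnigramLM("we clearly see a large effect".split())
    query = "we might see an effect".split()
    # The query should look more typical (lower cross-entropy) under type A.
    print(type_a.cross_entropy(query), type_b.cross_entropy(query))
```

The difference between the two cross-entropies acts as a distinctiveness score: the larger the gap, the more the text resembles one sample rather than the other.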

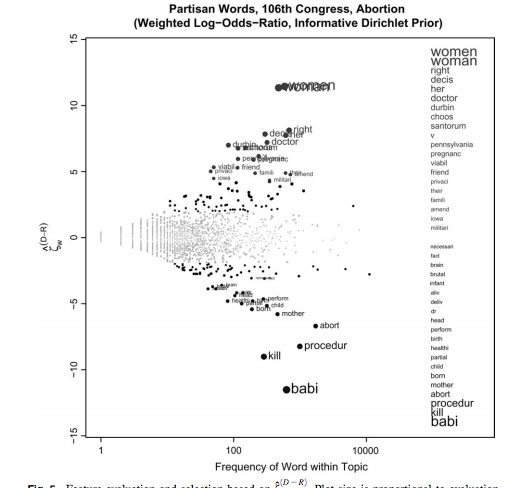

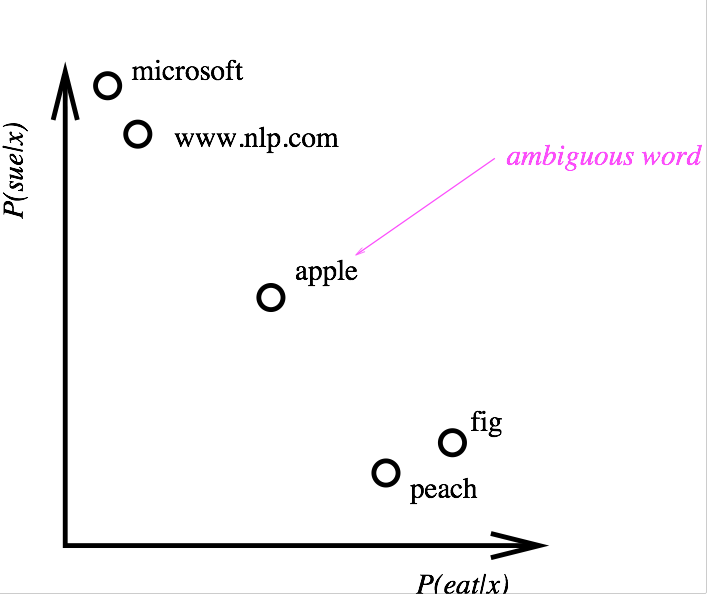

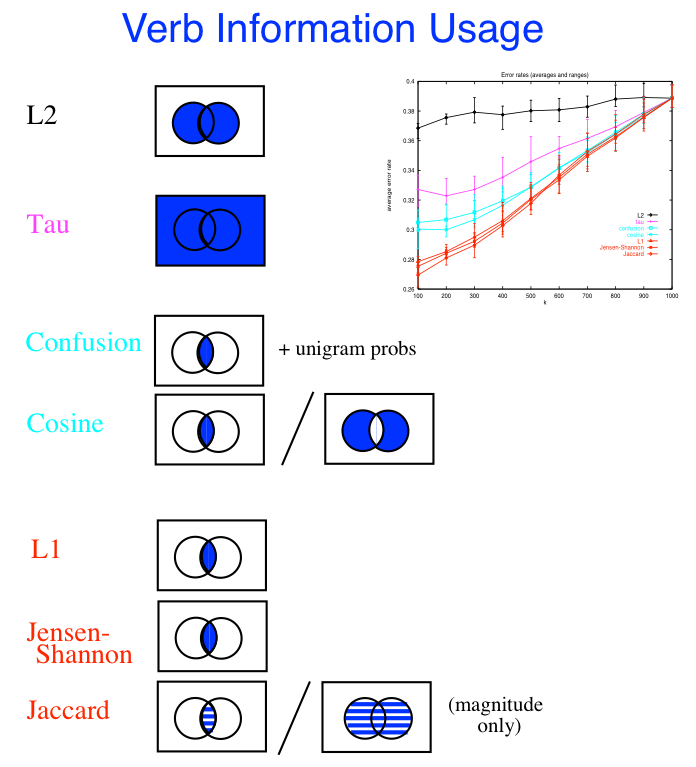

Distributional similarity (word embeddings are the modern version)

Here's a figure from 1997 about ideas from the early '90s. For references, see the word embeddings section later in this document.
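For readers who have not seen the pre-embedding version, a minimal sketch of distributional similarity follows: each word is represented by counts of the words appearing near it, and two words are compared by the cosine of those count vectors. This is one common instantiation, not necessarily the one shown in the figure; the window size, function names, and toy corpus are assumptions for illustration.

```python
import math
from collections import Counter, defaultdict


def context_vectors(sentences, window=2):
    """Map each word to a Counter of words seen within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        for i, word in enumerate(sentence):
            lo, hi = max(0, i - window), min(len(sentence), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][sentence[j]] += 1
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(
        sum(x * x for x in v.values())
    )
    return dot / norm if norm else 0.0


if __name__ == "__main__":
    # Toy corpus (made up): "cat" and "dog" share contexts, "car" does not.
    corpus = [
        "the cat drinks milk".split(),
        "the dog drinks water".split(),
        "a car needs fuel".split(),
    ]
    vecs = context_vectors(corpus)
    print(cosine(vecs["cat"], vecs["dog"]), cosine(vecs["cat"], vecs["car"]))
```

Words that occur in similar contexts get similar vectors; word embeddings compress the same kind of co-occurrence information into dense, low-dimensional vectors.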


...


It represents an intuitively slightly ridiculous null hypothesis that often works surprisingly well as a feature, most likely because it correlates with a lot of other features of interest. Examples: (to be inserted)

A feature-effectiveness test that's caught my eye

Wang, Zhao and Aron Culotta, When do Words Matter? Understanding the Impact of Lexical Choice on Audience Perception using Individual Treatment Effect Estimation. AAAI 2019. [code]

How do we proceed during the age of deep learning, where, for prediction, we don't need to (aren't supposed to) worry about features anymore?


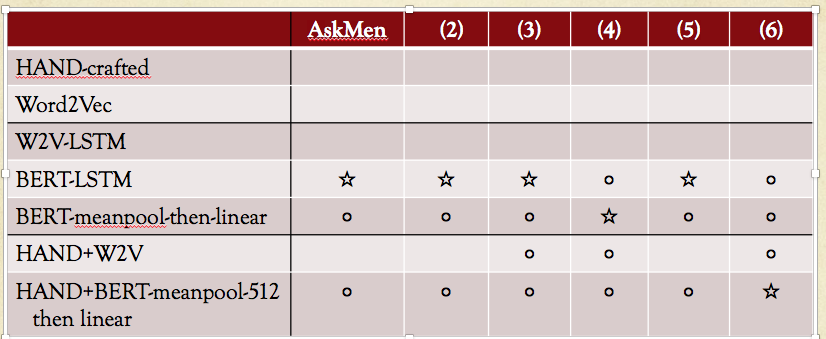

Comparison of hand-crafted features against deep learning on predicting controversial social-media posts.

Star = best in column; circle = performance within 1% of the best in column. Columns: different subreddits.

Question/proposal: where is the word embedding version of LIWC? ("Can we BERT LIWC?")

Language modeling = the bridge?

Note that the basic units might be characters or Unicode code points ("names of character") instead of words.
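To make the point about units concrete, the sketch below splits a string into Unicode code points and looks up each one's official character name with Python's standard `unicodedata` module. The helper name `code_point_units` is an assumption for illustration; whether code points, their names, or something else are the right units for a given model is left open here.

```python
import unicodedata


def code_point_units(text):
    """Return (character, code point, Unicode name) triples, one per code point."""
    return [
        (ch, f"U+{ord(ch):04X}", unicodedata.name(ch, "<unnamed>"))
        for ch in text
    ]


if __name__ == "__main__":
    # The same visible letter can be one code point or two.
    for label, s in [("precomposed", "\u00e9"), ("decomposed", "e\u0301")]:
        print(label, [(cp, name) for _, cp, name in code_point_units(s)])
```

The precomposed and decomposed forms look identical on screen but yield different unit sequences, so a character-level language model sees them as different inputs unless the text is normalized first.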
